History of numerical weather prediction

The history of numerical weather prediction (NWP) at the Met Office, from the early 1950s to present.

NWP uses the latest weather observations alongside a mathematical computer model of the atmosphere (such as the Unified Model run at the Met Office) to produce a weather forecast.

The Met Office is recognised as a world leader in NWP, a position rooted in the pioneering work of Lewis Fry Richardson, who joined the Met Office in 1913. The Met Office produced its first operational computer forecast on 2 November 1965.

NWP is now at the heart of all weather forecasts and warnings as well as much of the research and development undertaken to understand our weather and climate.

1952 to 1965 - The start of NWP in the UK

Early experiments

The first NWP forecast in the UK was completed by Fred H. Bushby and Mavis K. Hinds in 1952 under the guidance of John S. Sawyer FRS. These experimental forecasts used a 12 × 8 grid with a grid spacing of 260 km and a one hour time-step. A 24 hour forecast required four hours of computing time on the EDSAC computer at Cambridge and the LEO computer of J. Lyons & Co.

Following these initial experiments, work moved to the Ferranti Mark 1 computer at the Manchester University Department of Electrical Engineering.

Meteor

In January 1959, a Ferranti Mercury computer, known as ‘Meteor’, was installed at the Met Office in Dunstable. This was a significant milestone, as it was the first computer dedicated to NWP research.

Meteor was used to trial an operational forecasting suite in early 1960, using the improved Bushby–Whitelam three level model covering western Europe and the North Atlantic.

The suite consisted of a six hour forecast from an 0000 UTC analysis, followed by a 0600 UTC re-analysis and a 24 hour forecast. The forecasts took too long to run, and it was concluded that a more powerful and reliable computer was required.

Comet

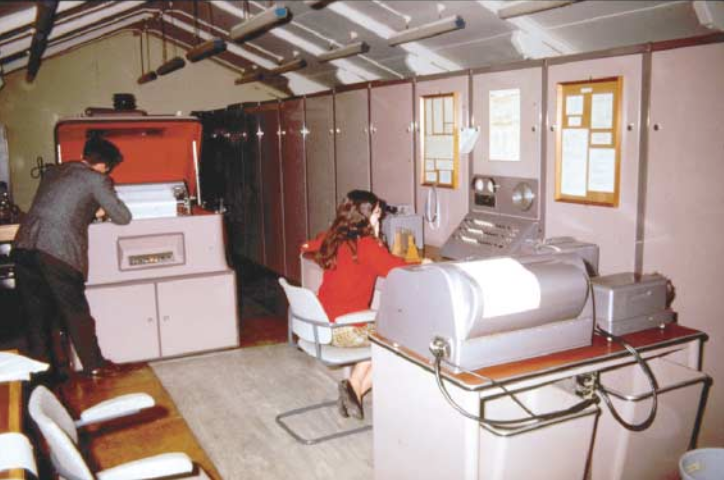

The computer which replaced Meteor was the English Electric KDF9, called ‘Comet’, which was installed at the Met Office in Bracknell in 1965 and cost £500,000.

Comet used transistors, had a speed of 60,000 arithmetic operations per second and a memory of 12,000 numbers, and could output charts in ‘zebra’ form on a line printer and, later, on a pen plotter.

1965 onwards - Operational NWP forecasts at the Met Office

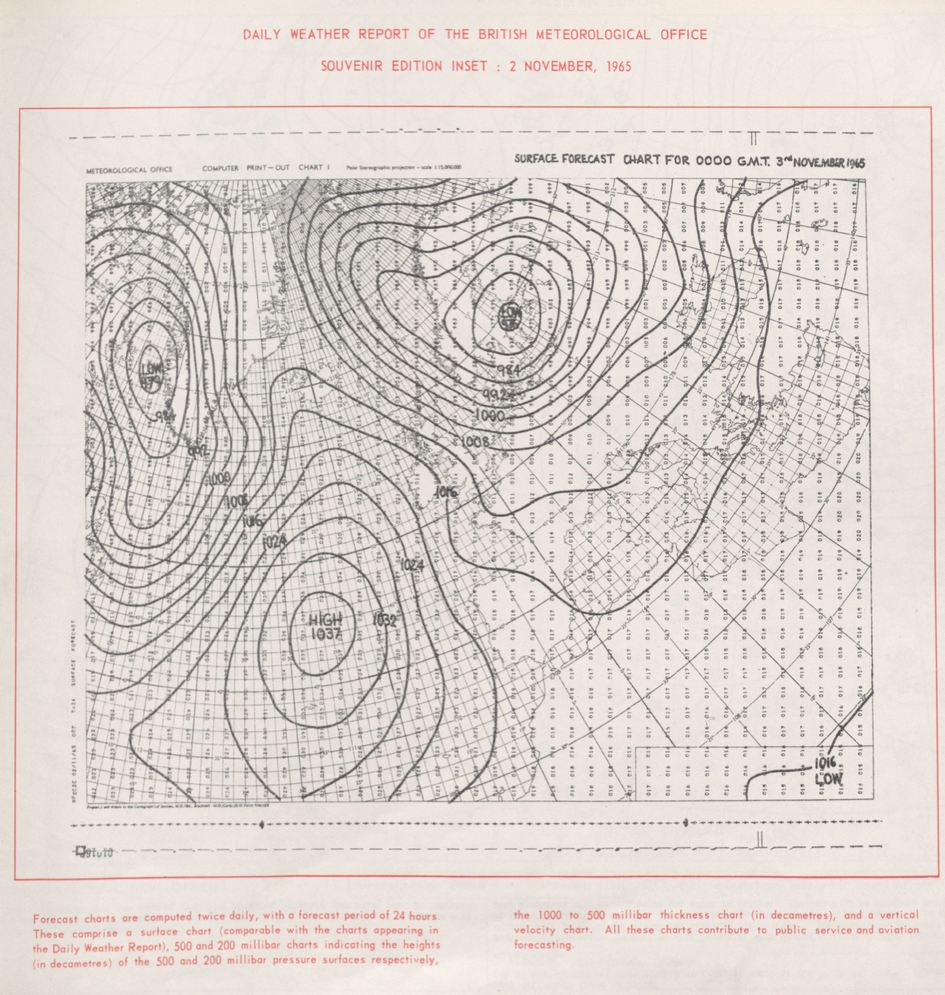

The first operational computer forecast was produced by the Met Office on 2 November 1965, using the Comet computer. The media were invited to witness this landmark in the history of the Met Office and gave it unprecedented coverage both in the press and on TV.

Above: The first operational NWP forecast produced by the Met Office on 2 November 1965. The forecast shows surface pressure valid at 0000 GMT on 3 November 1965.

Comet provided the capability to produce forecasts twice a day using the three-level, dry, filtered Bushby–Whitelam model covering the North Atlantic and Western Europe on a 300 km horizontal grid length at 60°N. Initial conditions were produced using a local surface fitting method for the height field and the balance equation for winds.

Above: The English Electric KDF9 'Comet' computer at the Met Office (Bracknell) in 1966.

1970s - Global exchange of weather observations

The global exchange of weather observations grew rapidly as the Global Telecommunication System (GTS) of the World Meteorological Organization (WMO) transformed communication between national weather services, and satellite data began to be exchanged. As one of the hubs on the Main Trunk Network (MTN), the Met Office installed a pair of Marconi Myriad computers dedicated to message routing.

1971 - IBM 360/195 computer

The IBM 360/195 computer was used between 1971 and 1982. It had a speed of 4 million arithmetic operations per second and a memory of 250,000 numbers, and was installed in the newly built Richardson Wing of the Met Office headquarters.

Unlike its predecessor, this computer was constructed using integrated circuits. Forecasters could interact with the computer through an interactive screen and keyboard. A microfilm plotter provided a new, flexible, output medium.

Above: A view of the computer hall housing the IBM 360/195 at the Met Office (Bracknell) in 1971.

1972 - 10-level model

The 10-level Bushby–Timpson model, based on a scheme formulated by J. S. Sawyer, solved the Navier–Stokes equations of fluid motion, together with the thermodynamic, heat transfer and continuity equations, in their primitive forms, obviating the need for the geostrophic assumption.

It was implemented in 1972, first in the Octagon model, with a 300 km grid length at 60°N, and was run twice daily for three-day Northern Hemisphere forecasts.

A year later the Rectangle model was implemented, with a 100 km grid covering much of the North Atlantic and Europe (shown by the darker grey shading in the image on the right). Its specific aim was to predict rainfall from fronts.

The models were initialised by fitting orthogonal polynomial surfaces through height observations and then deriving winds using the balance and omega equations. Radiosondes were the main observation source, supplemented by AIREPS and the first satellite observations: SIRS & SATEMS.

1976 - Ocean wave forecasts

The Met Office's involvement in numerical wave forecasting dates from 1974, when weather services for the developing North Sea oil industry required better prediction of long-period swell.

A first generation model was implemented in 1976, followed by a more sophisticated second generation model in 1979. This remained the foundation of Met Office ocean wave services until 2008, when the third generation US WAVEWATCH III® model was implemented.

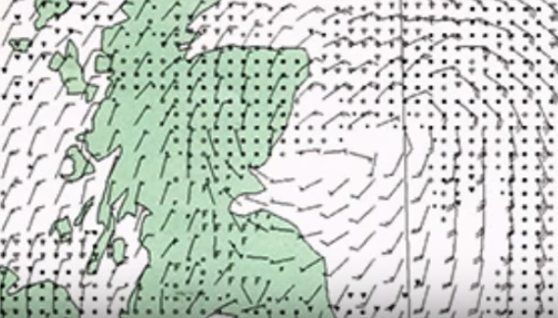

Above: One of the early ocean wave forecasts.

1980s - Forecaster guidance

During the 1980s, NWP output reached forecasters in the Central Forecast Office through interactive monitors and on charts produced by large flat-bed plotters. Meanwhile, advances in communications enabled NWP output to be sent to forecasting outstations by document facsimile (GRAFNET) and the Outstation Display System (ODS).

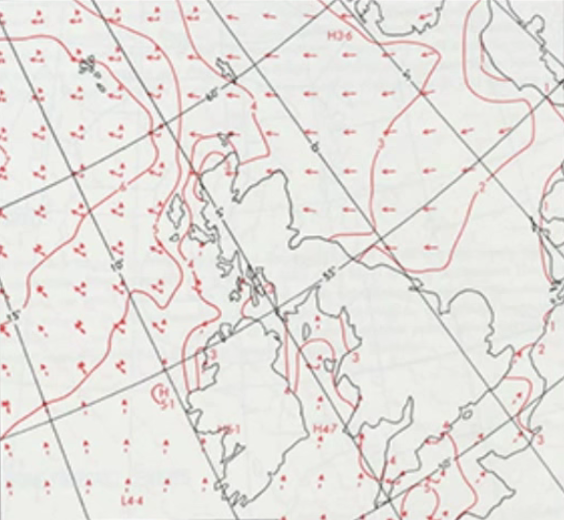

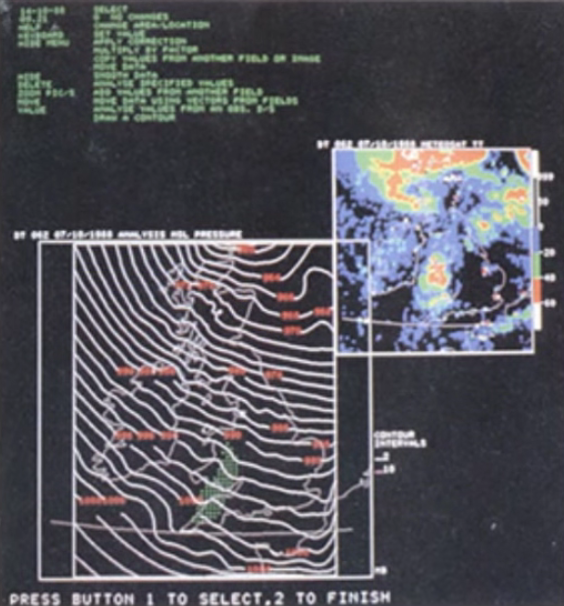

Above: One of the interactive monitors in the Central Forecast Office during the 1980s.

Above: How the first forecaster guidance used to look in the 1980s, as displayed on interactive monitors.

1982 - Global capability

A new 15-level NWP model was implemented in 1982. It used a development of the 10-level model's dynamical core on a latitude-longitude grid, with physical parametrizations derived from the General Circulation Model developed for research during the First GARP Global Experiment (FGGE) in the late 1970s.

It was rapidly extended to a global capability to provide military support following the invasion of the Falkland Islands in 1982.

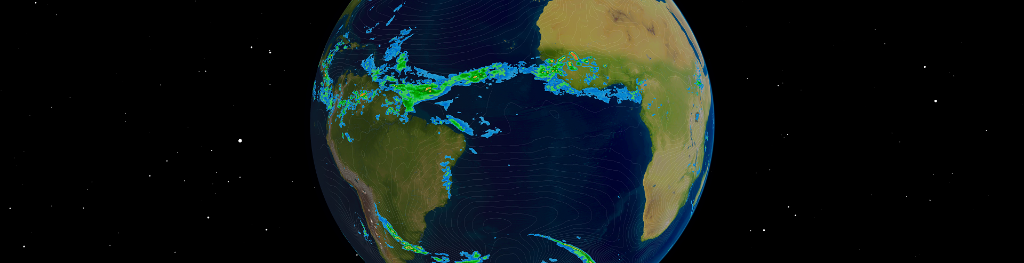

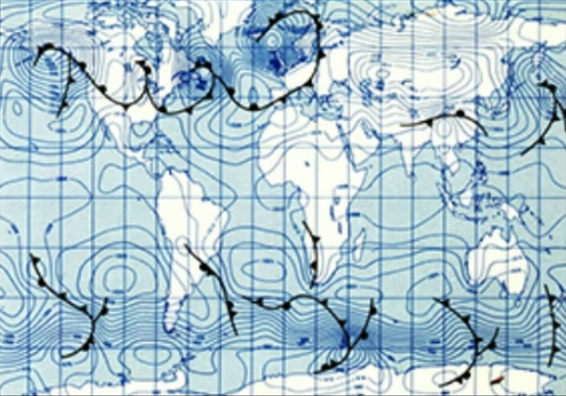

It used an optimum interpolation data assimilation scheme combined with nudging, an approach also used in FGGE. An example of an early global NWP forecast is shown in the image on the right.

1985 - Mesoscale NWP

To remove limitations on the representation of vertical circulations associated with convection and complex topography, a new higher-resolution model was developed from the early 1970s using a non-hydrostatic, compressible equation set with a semi-implicit treatment of sound waves (Tapp and White 1976).

It was implemented for routine weather forecasting in 1985, the first such model in the world to be used operationally. It covered just the UK and was aimed at forecasting topographically forced local weather such as sea breeze convection and fog.

The parametrizations of cloud and turbulence were much more sophisticated than those used in the global and regional models of the time. The model was initialised by downscaling from the regional model and then adding local detail from radar, satellite and surface observations using the Interactive Mesoscale Initialisation (IMI). An example of its output is shown in the image above.

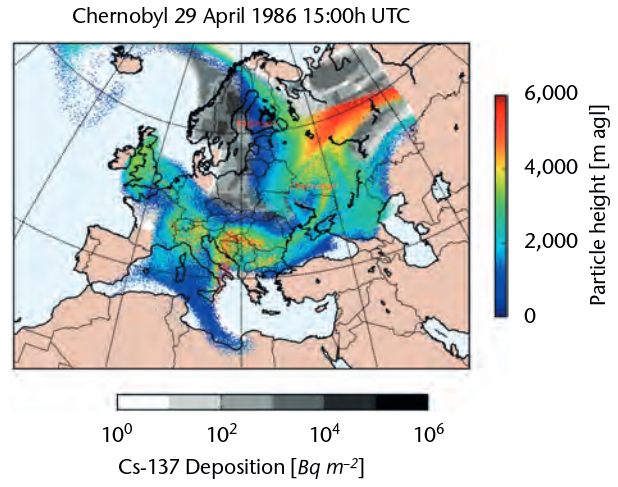

1986 - Chernobyl and NAME

Following the widespread dispersion of the radioactive cloud from the Chernobyl accident in 1986, the NAME (Numerical Atmospheric-dispersion Modelling Environment) dispersion modelling system was developed.

NAME has subsequently been continuously developed and used to model dispersion from an even wider range of hazards including chemical fires, volcanic eruptions and disease outbreaks.

Above: A dispersion forecast of the radioactive plume following the Chernobyl accident in 1986, using a modern version of NAME.

1990 - The Unified Model

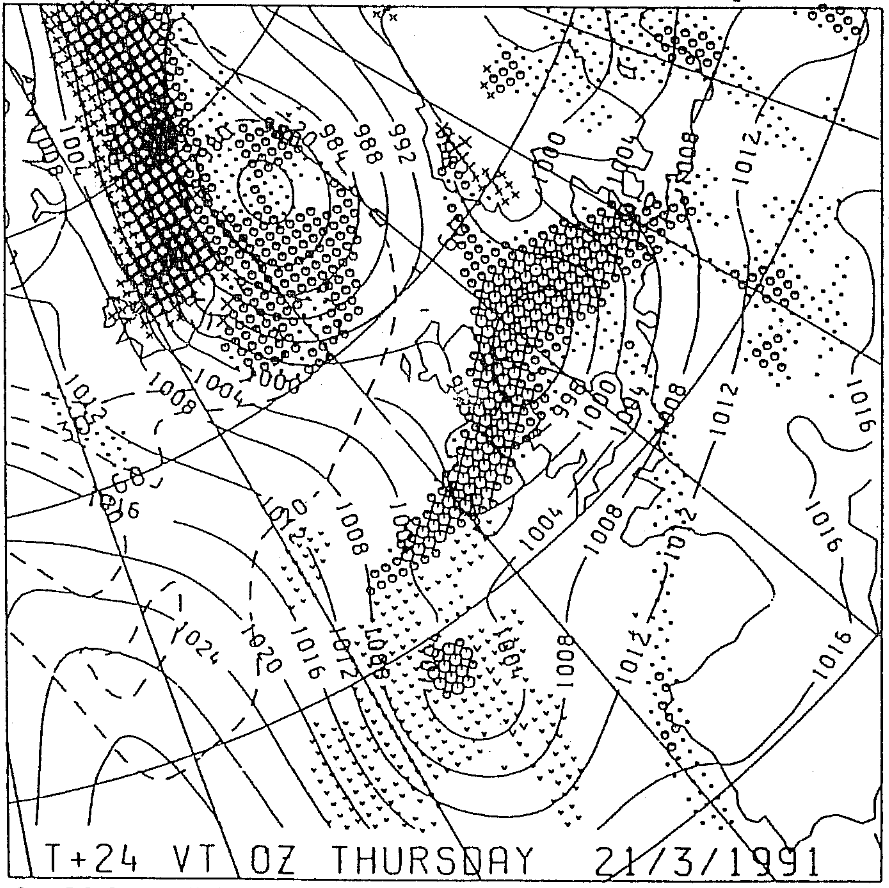

The Unified Model is a numerical model of the atmosphere which is used for both weather and climate applications. Real time testing was carried out in 1990, with the operational implementation on 12 June 1991.

The Unified Model was set up with global and limited-area configurations for short range forecasts, as well as the ability to run long range climate simulations. At the time of implementation, the global model for short range forecasts had a horizontal resolution of 0.833 degrees latitude × 1.25 degrees longitude (~90 km), while the global model for climate simulations had a horizontal resolution of 2.5 degrees latitude × 3.75 degrees longitude (~300 km). The initial implementation also had a set of standard model levels in the vertical, with 20 levels used for short range forecasts and 42 levels used for global climate simulations.

Above: An early example of a 24 hour forecast showing mean sea level pressure and precipitation from the limited area version of the Unified Model, valid at 00 UTC on 21 March 1991. From Cullen (1993).

The Unified Model is in continuous development by the Met Office and its partners, with each new release adding state of the art understanding of atmospheric processes. For example, the resolution of the operational global model run at the Met Office improved from 90 km in 1991 to 10 km in 2017. The data assimilation scheme was upgraded to 3D-Var in 1999 and to 4D-Var in 2004, and now uses an ensemble to provide information on the error structure of the model first guess. In 2003 the Unified Model was upgraded with a compressible, non-hydrostatic dynamical core, and more recently it has moved to the more accurate ENDGame core.

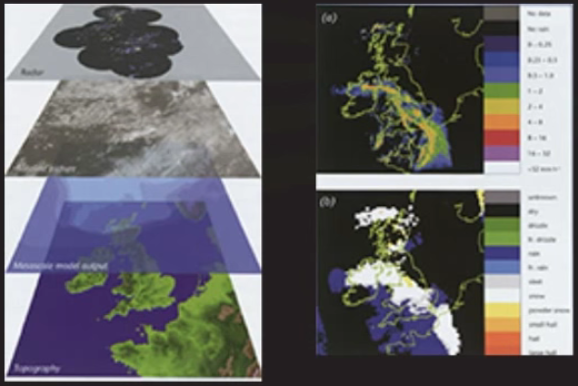

1995 - Nowcasting

The FRONTIERS man-machine nowcasting system for rainfall, based on radar and satellite data, was first implemented in the late 1980s.

Its fully automated successor was Nimrod, implemented in 1995, which provided detailed forecasts of a variety of weather elements for the first few hours ahead.

Above: Example forecast output from the Nimrod nowcasting system in the mid 1990s.

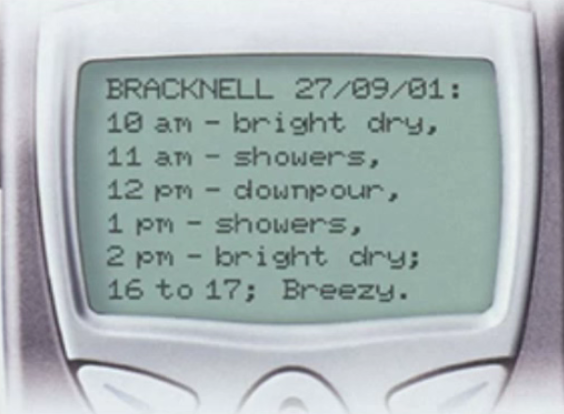

2000 - Site specific forecasts

Initial attempts to produce automatic worded site-specific forecasts were made in the 1970s using gridpoint output from the Rectangle model. In the late 1990s, the site-specific forecast model (SSFM) took account of local topography and land use.

The advent of mobile communications made delivery of high density local forecasts realistic and the Met Office launched the Time and Place service, delivered using the WAP mobile phone protocol, in 2000.

Above: An example site specific forecast from 2001 presented on a mobile phone.

With current high resolution NWP, forecasts for thousands of UK and global locations are now generated by diagnostic downscaling, and are available to view on our website and weather app.

2003 - Moving the supercomputer

The Met Office's move from Bracknell to Exeter in 2003 was one of the largest IT relocations ever attempted, and included the physical transport of the existing Cray T3E supercomputers (shown below).

The two computers were moved separately, with one maintaining forecasts in Bracknell until the other took over in Exeter. The move was completed two weeks early without a single forecast being missed.

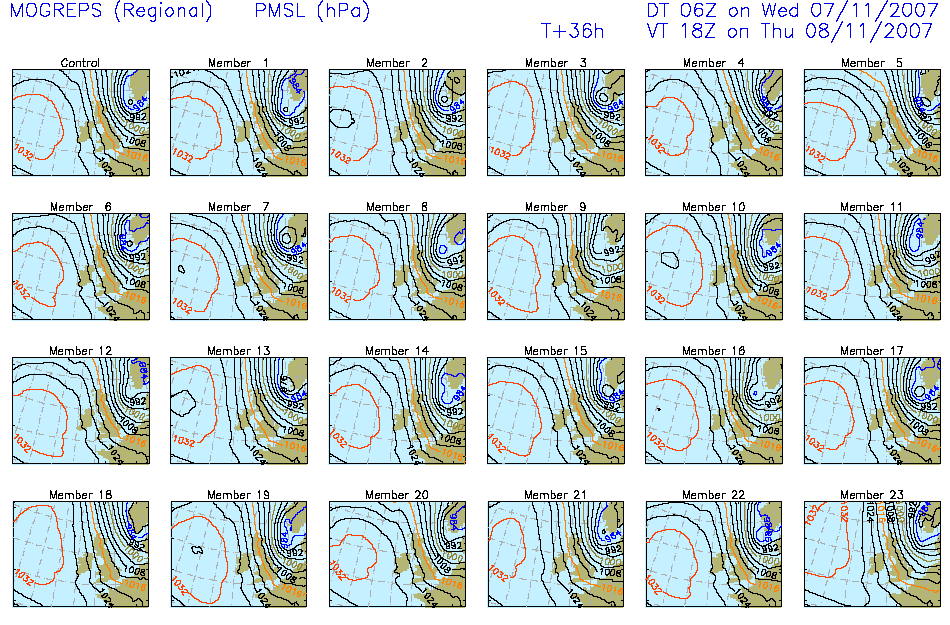

2005 - Ensemble forecasting

Following early work on seasonal ensemble forecasting in the late 1980s, the Met Office concentrated on interpreting ECMWF medium range ensemble forecasts.

However, when the value of ensemble forecasts at shorter ranges became evident, the Met Office Global and Regional Ensemble Prediction System (MOGREPS) was implemented in 2005, becoming fully operational in 2007 (Bowler et al., 2008).

Above: A 36 hour forecast from each ensemble member within the regional component of MOGREPS, ahead of the East Coast storm surge on 9 November 2007.

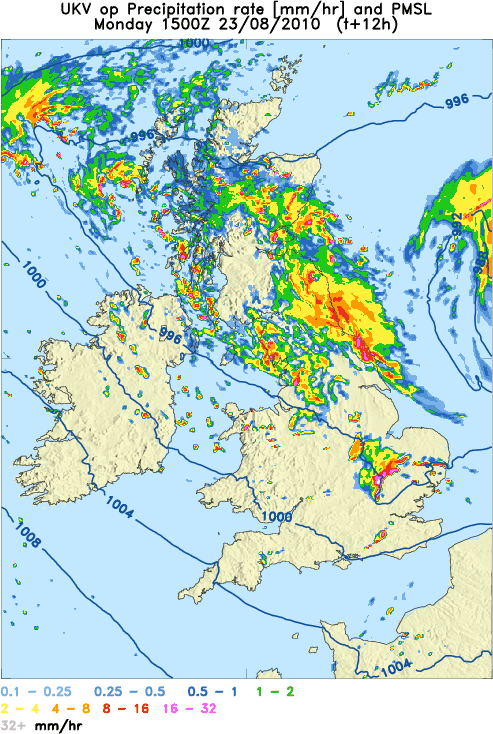

2010 - UK convective scale model

Following earlier work with a 4 km grid-length model, the first truly convection-permitting UK model was introduced in 2010, with one of the early operational forecasts shown below.

Above: One of the first operational convection-permitting forecasts in 2010.

This model is still in use today and has a variable horizontal resolution, leading it to be known as the 'UKV' model. The variable grid-length gives a 1.5 km grid resolution over the British Isles, providing unprecedented detail of local weather including convection (Tang et al., 2012).

The main benefit of the UKV is to better resolve convective showers or storms which, in extreme cases, can give rise to major flooding events or disruptive snow in winter.

Convective clouds are typically less than 10 km in horizontal extent and so have to be represented as subgrid processes at global model resolutions. In contrast, a 1.5 km model can often produce the convection explicitly on the model grid.

2012 - Convection permitting ensemble

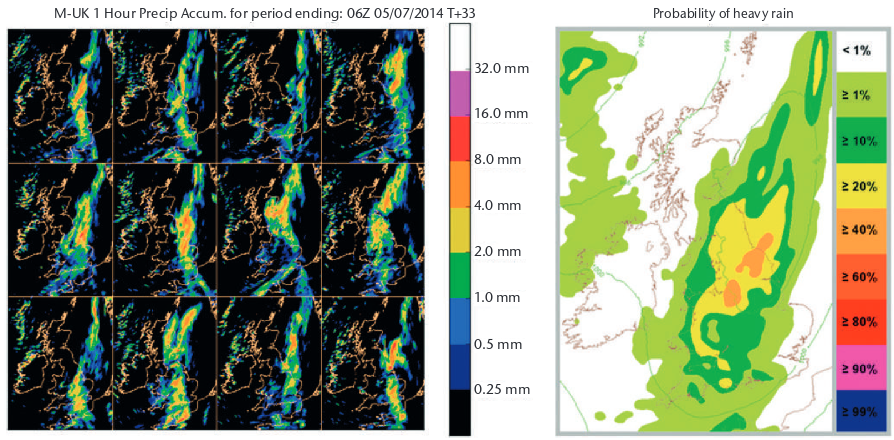

Recognising that convective scale detail has limited predictability due to short length scales and rapid chaotic error growth, the UKV model was complemented with a 2.2 km UKV-based ensemble, MOGREPS-UK, which was first demonstrated for the London 2012 Olympic Games (Golding et al., 2014; Tennant, 2015).

Above: Example of using MOGREPS-UK to capture the uncertainties in exactly where heavy rainfall will occur across the UK associated with a frontal system. Left image: rainfall forecasts from the 12 MOGREPS-UK ensemble members. Right image: probability of heavy rainfall, derived by neighbourhood processing the 12 ensemble members.
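The neighbourhood processing mentioned in the caption can be illustrated with a minimal sketch. This is not the Met Office's operational implementation. It assumes a square neighbourhood of grid points, clipped at the domain edges, and a single fixed rainfall threshold. The probability at each grid point is then the fraction of all member values inside its neighbourhood that reach the threshold. The function name `neighbourhood_probability`, the square shape and the edge handling are all hypothetical choices made for illustration.

```python
import numpy as np


def neighbourhood_probability(members, threshold, radius):
    """Illustrative neighbourhood probability from an ensemble (hypothetical helper).

    members:   array of shape (n_members, ny, nx), e.g. rainfall accumulations.
    threshold: value that counts as an "event" (e.g. heavy rainfall).
    radius:    half-width of an assumed square neighbourhood, in grid points.
    """
    # 1.0 where a member reaches the threshold at a grid point, else 0.0
    exceed = (members >= threshold).astype(float)
    _, ny, nx = exceed.shape
    prob = np.zeros((ny, nx))
    for j in range(ny):
        for i in range(nx):
            # Square neighbourhood, clipped where it meets the domain edge
            j0, j1 = max(0, j - radius), min(ny, j + radius + 1)
            i0, i1 = max(0, i - radius), min(nx, i + radius + 1)
            # Pool every member and every grid point in the neighbourhood
            prob[j, i] = exceed[:, j0:j1, i0:i1].mean()
    return prob


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic rainfall for 12 members on a 50 x 50 grid (made-up data)
    rain = rng.gamma(shape=0.5, scale=4.0, size=(12, 50, 50))
    p = neighbourhood_probability(rain, threshold=8.0, radius=3)
    print(p.shape, p.min(), p.max())
```

With 12 members and `radius=1`, each interior grid point pools 12 × 9 = 108 values. A single member exceeding the threshold at one point therefore adds 1/108 to the probability at each of the nine points whose neighbourhood contains it. This spreads sharp, uncertain convective detail into a smoother probability field.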

2015 to 2017 - Cray XC40 supercomputer

The first part of the Met Office’s latest supercomputer arrived in 2015 and was fully installed by 2017.

Now complete, this new computer (shown below) has 460,000 cores, delivering a peak performance of 16 petaflops. It has 2 petabytes of memory for running complex calculations and 24 petabytes of storage for saving data.

The increased power enables even higher resolution forecasts. Experiments at resolutions finer than 1 km are already showing remarkably accurate detail, while refinements to our longer range forecasts are reproducing the global scale circulation changes that influence the character of our seasonal weather.

References

Bowler N, Arribas A, Mylne K, Robertson K, Beare S. 2008. The MOGREPS short-range ensemble prediction system. Q. J. R. Meteorol. Soc. 134: 703–722.

Cullen MJP. 1993. The unified forecast/climate model. Meteorol. Mag. 112: 81–9

Golding BW, Ballard SP, Mylne K, Roberts N, Saulter A, Wilson C, Agnew P, Davis LS, Trice J, Jones C, Simonin D, Li Z, Pierce C, Bennett A, Weeks M, Moseley S. 2014. Forecasting Capabilities for the London 2012 Olympics. Bull. Am. Meteorol. Soc. 95: 883–896.

Golding BW, Mylne K, Clark P. 2004. The history and future of numerical weather prediction in the Met Office. Weather. 59: 299–306.

Hinds MK. 1981. Computer story. Meteorol. Mag. 110: 69–81.

Lynch P. 2007. The origins of computer weather prediction and climate modeling. Journal of Computational Physics. 227: 3431–3444.

Tang Y, Lean H, Bornemann J. 2012. The benefits of the Met Office variable resolution NWP model for forecasting convection. Meteorological Applications. 20: 417–426.

Tapp MC, White PW. 1976. A non-hydrostatic mesoscale model. Q. J. R. Meteorol. Soc. 102: 277–296.

Tennant W. 2015. Improving initial condition perturbations for MOGREPS-UK. Q. J. R. Meteorol. Soc. 141: 2324–2336.